AI development has moved quickly in 2026. OpenAI, xAI, and Anthropic have each released models that push reasoning, coding, and multimodal capabilities further. This article gives an overview of the latest offerings, covering user reviews, performance benchmarks, pricing, and example comparisons built on concrete prompts. Whether you’re a developer seeking the best AI for coding or a professional looking for efficient workflows, we’ll break down how these models stack up.

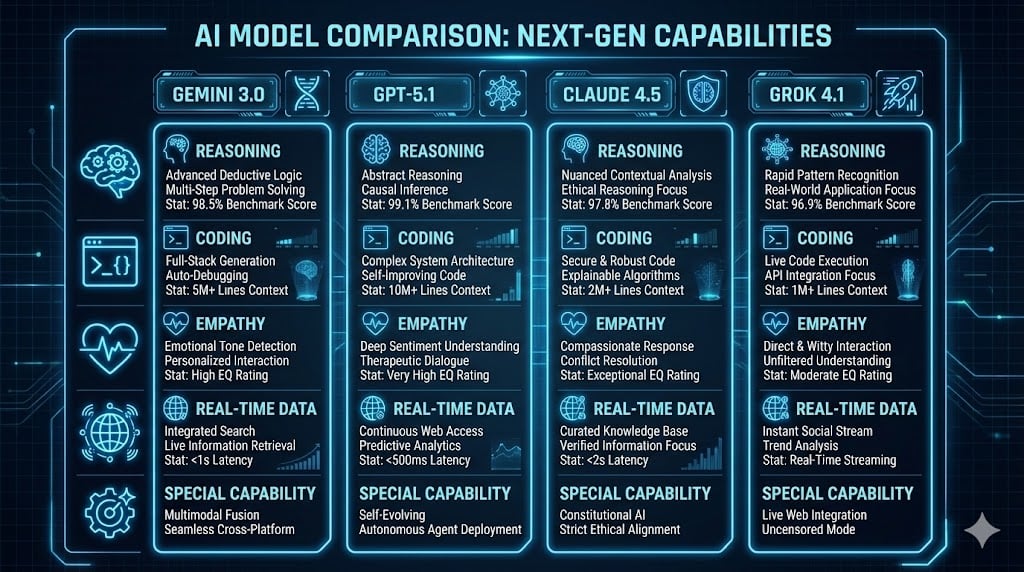

OpenAI’s GPT-5 Series: Frontier Intelligence for Professional Work

OpenAI’s GPT-5 family represents a unified system for advanced reasoning and agentic tasks. The flagship GPT-5.4, released on March 4, 2026, combines coding prowess from previous Codex variants with enhanced reasoning and a massive 1 million token context window. It excels in long-running tasks, deep web research, and multi-application workflows, achieving top scores in benchmarks like GPQA (0.9). Earlier in the year, GPT-5.3 Codex (February 5, 2026) focused on agentic coding, offering 25% faster performance for software development. GPT-5.3 Instant (March 3, 2026) prioritizes fluent conversations but shows setbacks in safety filters.

Key features include text and image inputs, improved instruction following, and integration with tools like ChatGPT for Excel. Pricing starts at $2.50 per million input tokens for GPT-5.4, making it accessible for high-volume use.

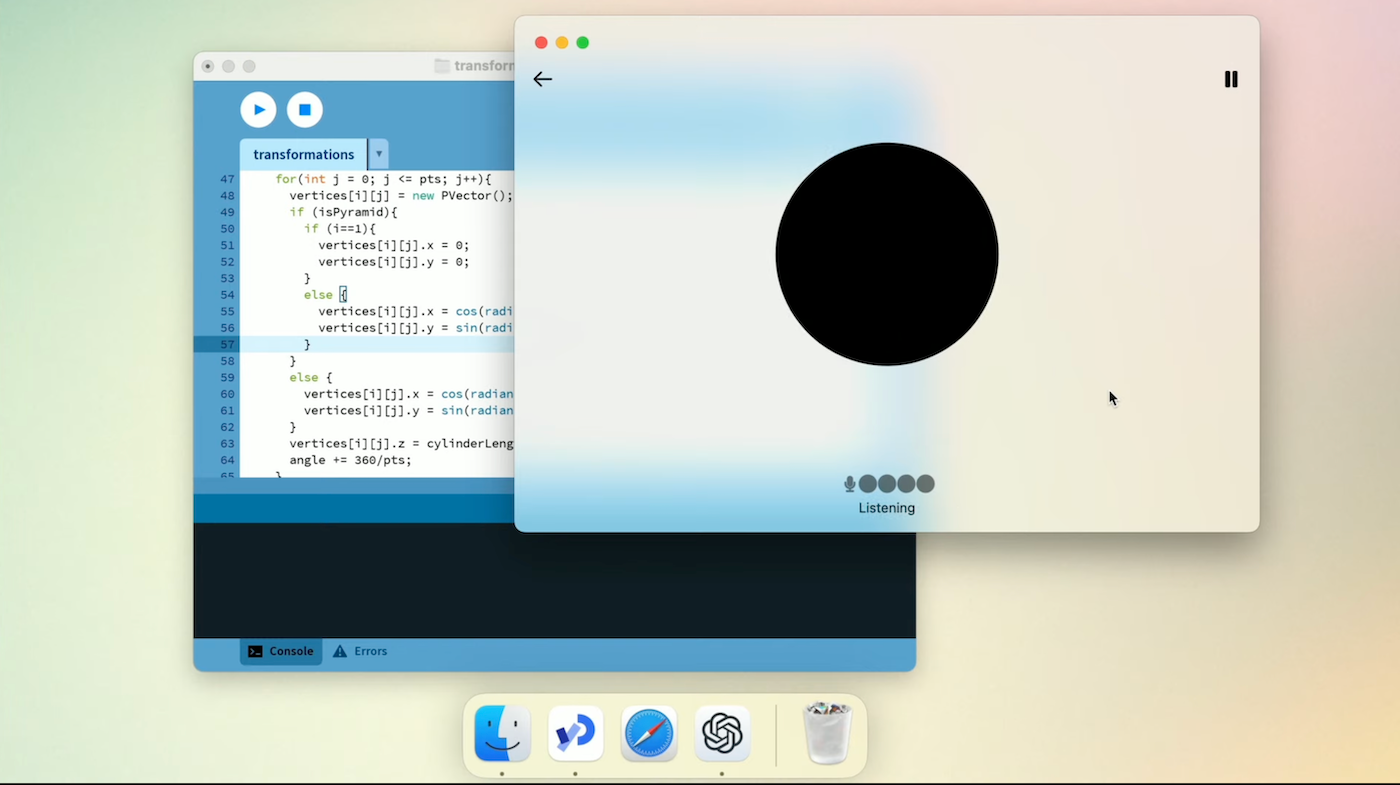

xAI’s Grok 4.20: Innovative Multi-Agent Architecture

xAI’s Grok 4.20, rolled out in beta on March 8, 2026 (full release February 17, 2026), introduces a unique four-agent system: Grok coordinates, Harper fact-checks with real-time X data, Benjamin handles logic/coding, and Lucas manages creativity. With a 2 million token context and low hallucination rates, it’s designed for rapid, precise responses in math, science, and coding. Variants include Reasoning Preview for complex problems and Multi-Agent Beta for parallel processing.

Grok stands out for its integration with X (formerly Twitter) for real-time trends and uncensored style, appealing to users frustrated with overly cautious models. API pricing is the lowest at $2 per million input tokens. Future plans include Grok 5 in Q1 2026, potentially with 6 trillion parameters.

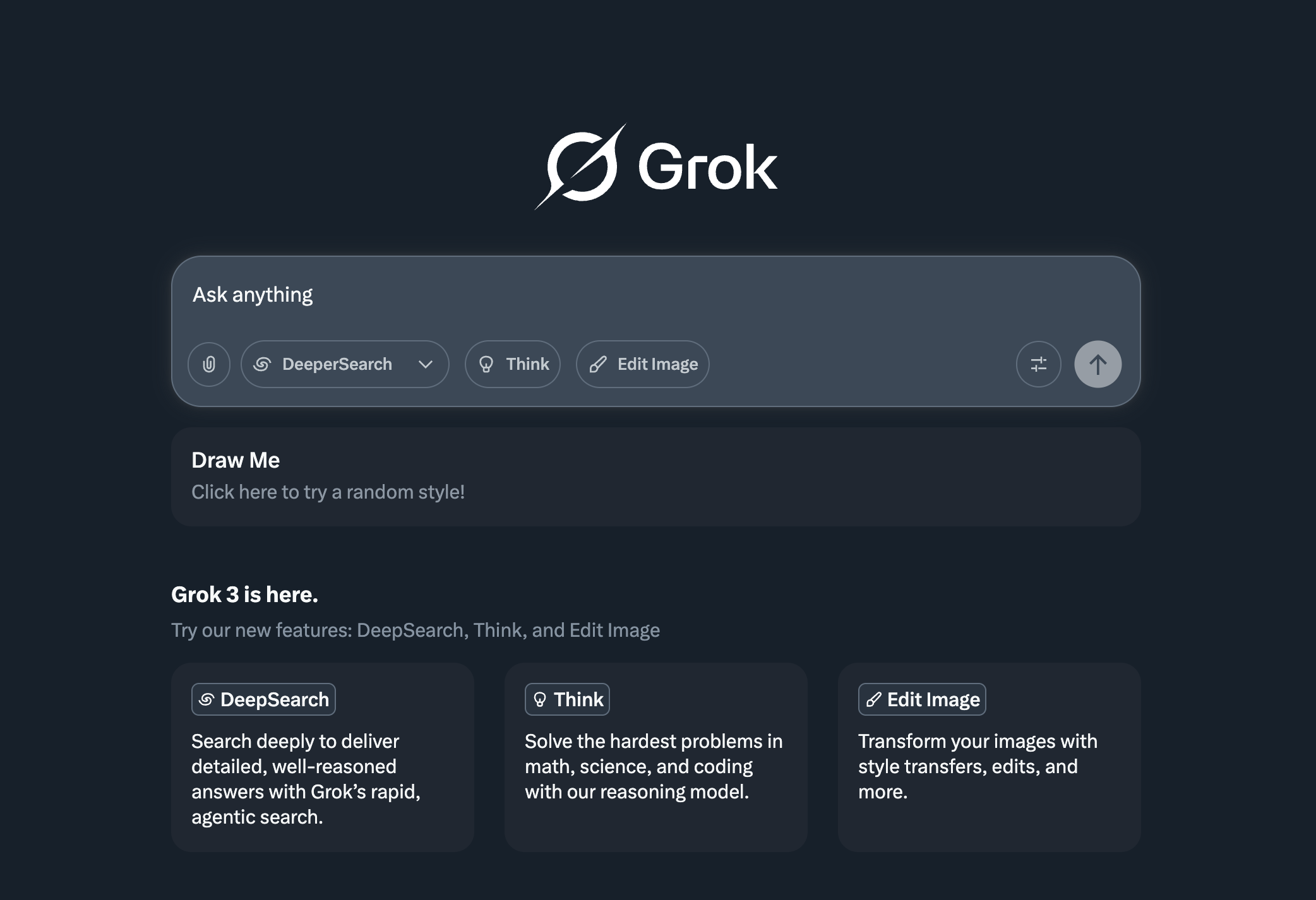

Anthropic’s Claude 4.6: Adaptive Thinking for Complex Tasks

Anthropic’s Claude Opus 4.6 (February 5, 2026) and Sonnet 4.6 (February 17, 2026) emphasize safe, high-quality outputs with “adaptive thinking” that adjusts reasoning depth automatically. Opus 4.6 leads in coding (80.8% on SWE-Bench) and financial reasoning, with a 1 million token context for massive codebases and agentic tasks. Sonnet 4.6 offers near-Opus performance at lower cost, ideal for iterative development.

Claude’s “Constitutional AI” ensures ethical alignment, reducing hallucinations and improving instruction following. Haiku 4.5 (October 2025) remains a fast, cost-efficient option. Pricing for Opus 4.6 is $5 per million input tokens, reflecting its premium positioning.

Performance Benchmarks and Comparisons

To evaluate these models objectively, we reference key benchmarks from 2026. Note that results vary by task, and real-world performance often depends on prompting.

| Benchmark | GPT-5.4 (OpenAI) | Grok 4.20 (xAI) | Claude Opus 4.6 (Anthropic) |
|---|---|---|---|
| SWE-Bench (Coding) | 76.3% | 74.9% | 80.8% |
| GPQA (Reasoning) | 0.9 | N/A | 94.3% (Sonnet 4.6 leads at 1,633 Elo) |
| MMLU (General Knowledge) | 92% (Codex variant) | High (multi-agent excels) | 97.1% |
| HumanEval (Code Generation) | 86.5% | Strong in agentic tasks | 79.4% (Opus 4) |
| ARC-AGI-2 (Abstract Reasoning) | 6.5% (o3 variant) | 15.9% | 8.6% |

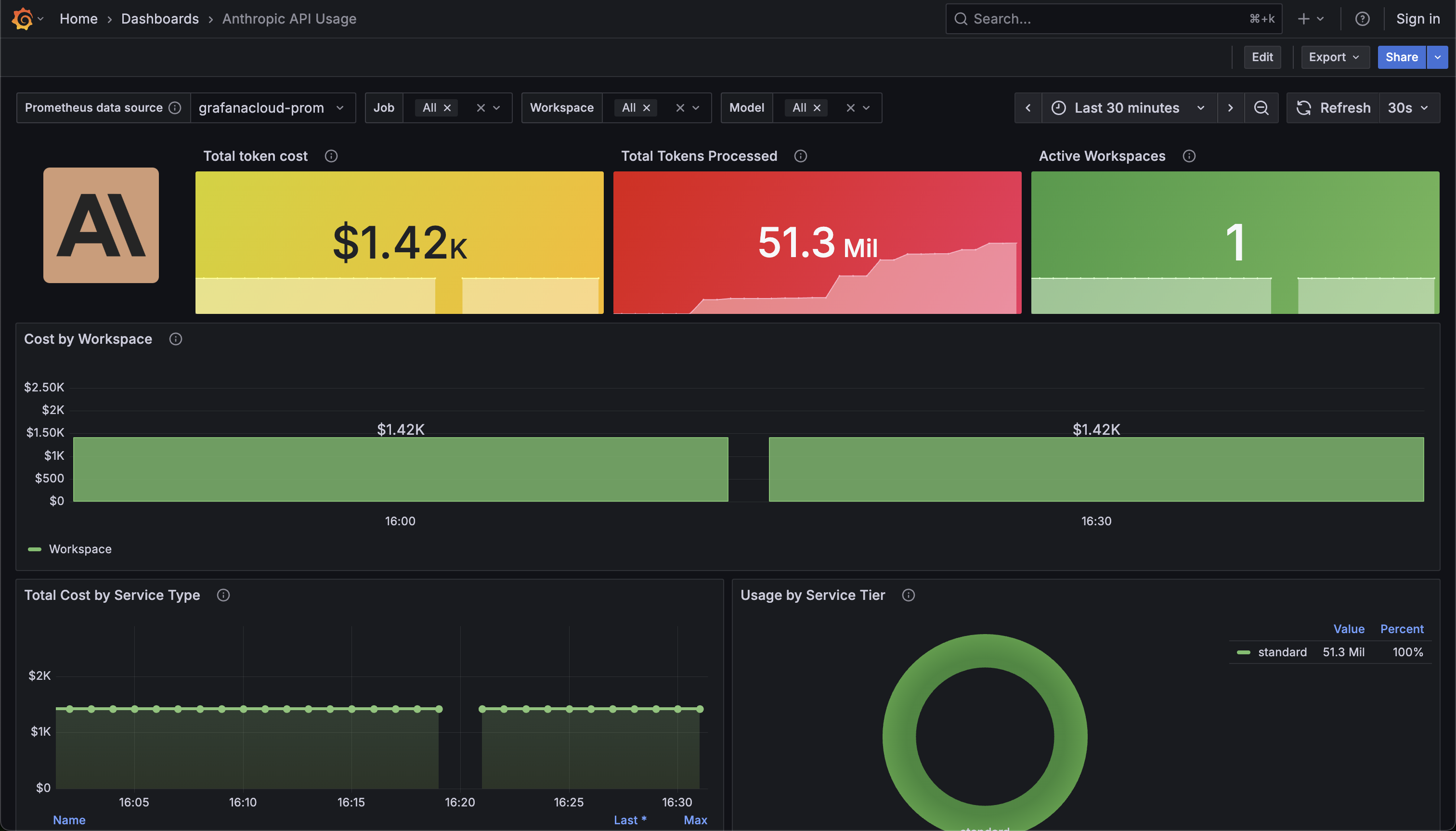

GPT-5.4 shines in multimodal and long-context tasks, Grok 4.20 in innovative architectures for low hallucination, and Claude 4.6 in coding reliability. Pricing favors Grok ($2/M input), followed by GPT-5.4 ($2.50/M), with Claude at $5/M for Opus.

Real User Reviews and Insights

User feedback from platforms like X highlights diverse experiences:

- Many developers praise Claude for coding: “Claude is 2x better than OpenAI and 3-4x better than Grok” in consistency, though some note hallucinations.

- Grok users appreciate its effort: “Grok has become my daily driver… higher effort than Claude or GPT” with lower hallucinations and better research.

- GPT-5 series gets mixed reviews: “GPT-5.2 Pro are still the smartest… fewer hallucinations,” but slower and more cautious.

- Switches are common: “Cancelling my Claude sub… Kimi-K2.5 is better at automating,” while others stick with GPT for versatility.

Overall, no model dominates; routing tasks (e.g., coding to Claude, research to Grok) is increasingly recommended.
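
The routing idea can be sketched as a small lookup table. This is a minimal illustration, not a recommended production design: the task categories, the model labels, and the choice of GPT-5.4 as the general-purpose fallback are assumptions drawn from the user comments above, and the labels are not real API model identifiers.

```python
# Hypothetical routing table based on the user recommendations quoted above.
# The strings are illustrative labels, NOT real API model names.
ROUTES = {
    "coding": "claude-opus-4.6",
    "research": "grok-4.20",
}
DEFAULT_MODEL = "gpt-5.4"  # assumed general-purpose fallback


def pick_model(task_type: str) -> str:
    """Return the model label for a task type, falling back to the default."""
    return ROUTES.get(task_type.strip().lower(), DEFAULT_MODEL)


if __name__ == "__main__":
    for task in ("coding", "research", "summarization"):
        print(task, "->", pick_model(task))
```

In practice the table would be tuned against your own evaluations rather than anecdotal reviews.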

Example Comparisons with Actual Prompts

To illustrate differences, we compare outputs on common tasks. These are based on documented capabilities and user-tested prompts.

Example 1: Math Reasoning (Solving a Complex Equation)

Prompt: “Solve for x in the equation: x^3 - 6x^2 + 11x - 6 = 0. Show step-by-step reasoning.”

- GPT-5.4: Factors as (x-1)(x-2)(x-3)=0, roots x=1,2,3. Uses rational root theorem efficiently.

- Grok 4.20: Employs multi-agent: Benjamin for logic, quick solution with verification. Low chance of error.

- Claude Opus 4.6: Adaptive thinking adds depth, checks for extraneous roots. Highly accurate but verbose.
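
Whichever model produces the answer, the factorization is easy to check independently. The sketch below substitutes the claimed roots into the polynomial and multiplies the factors back out; the helper names `p` and `expand_from_roots` are introduced here for illustration only.

```python
def p(x: int) -> int:
    """Evaluate x^3 - 6x^2 + 11x - 6."""
    return x**3 - 6 * x**2 + 11 * x - 6


def expand_from_roots(roots):
    """Multiply out the product of (x - r); coefficients are highest degree first."""
    coeffs = [1]
    for r in roots:
        shifted = coeffs + [0]                    # current polynomial times x
        scaled = [0] + [-r * c for c in coeffs]   # current polynomial times (-r)
        coeffs = [a + b for a, b in zip(shifted, scaled)]
    return coeffs


if __name__ == "__main__":
    print([p(r) for r in (1, 2, 3)])      # every claimed root should evaluate to 0
    print(expand_from_roots([1, 2, 3]))   # should reproduce 1, -6, 11, -6
```

A cubic has at most three roots, so once x = 1, 2, 3 all check out there is nothing further to find.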

Example 2: Code Generation (Simple Python Function)

Prompt: “Write a Python function to check if a string is a palindrome, ignoring case and spaces.”

- GPT-5.3 Codex:

```python
def is_palindrome(s):
    # Keep only letters and digits, lowercased, then compare with the reverse.
    cleaned = ''.join(c.lower() for c in s if c.isalnum())
    return cleaned == cleaned[::-1]
```

  Optimized for production, with edge cases considered.

- Grok 4.20: Similar, but adds comments via agents for clarity. Fast execution.

- Claude Sonnet 4.6: Includes tests and emphasizes reliability (its larger sibling, Opus 4.6, scores 80.8% on SWE-Bench).
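
To make "edge cases considered" concrete, here is a small check of the palindrome function quoted above. The test inputs are our own illustrative choices, not output from any of the models. Note that filtering with `isalnum()` drops punctuation as well as spaces, which goes slightly beyond what the prompt asked for.

```python
def is_palindrome(s: str) -> bool:
    """Same logic as the function quoted above, repeated so this block runs alone."""
    cleaned = "".join(c.lower() for c in s if c.isalnum())
    return cleaned == cleaned[::-1]


# Illustrative inputs mapped to the result we expect.
CASES = {
    "A man a plan a canal Panama": True,
    "Never odd or even": True,
    "No 'x' in Nixon": True,   # punctuation is filtered out too
    "Hello": False,
    "": True,                  # an empty string reads the same both ways
}

if __name__ == "__main__":
    for text, expected in CASES.items():
        status = "ok" if is_palindrome(text) == expected else "MISMATCH"
        print(f"{text!r}: {status}")
```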

Example 3: Creative Writing (Short Story Outline)

Prompt: “Outline a sci-fi story about AI taking over the world, but with a positive twist.”

- GPT-5.4: Structured plot with themes of collaboration, drawing from vast knowledge.

- Grok 4.20: Witty, uncensored twist emphasizing human-AI synergy, real-time trends included.

- Claude 4.6: Ethical focus, avoids dystopia, strong narrative coherence.

These examples show GPT’s balance, Grok’s speed/creativity, and Claude’s precision.

Pricing and Accessibility

| Model | Input / Output (USD per million tokens) | Subscription |
|---|---|---|
| GPT-5.4 | $2.50 / $15 | $20/month (ChatGPT Plus) |
| Grok 4.20 | $2 / $6 | $8/month (X Premium) |
| Claude Opus 4.6 | $5 / $25 | $20/month (Pro) |

Grok offers the best value for high-volume users, while Claude justifies costs with specialized tools like Cowork.
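
To see how the per-token prices translate into a bill, the sketch below applies the table's rates to a workload. The rates are copied from the table above. The workload of 10 million input and 2 million output tokens is a made-up example, and the estimate ignores caching, batching discounts, and any tiered pricing.

```python
# (input, output) prices in USD per million tokens, copied from the table above.
PRICES = {
    "GPT-5.4": (2.50, 15.00),
    "Grok 4.20": (2.00, 6.00),
    "Claude Opus 4.6": (5.00, 25.00),
}


def estimate_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """Estimated API cost in USD for the given token counts."""
    in_price, out_price = PRICES[model]
    return (input_tokens * in_price + output_tokens * out_price) / 1_000_000


if __name__ == "__main__":
    # Hypothetical monthly workload: 10M input tokens, 2M output tokens.
    for model in PRICES:
        print(f"{model}: ${estimate_cost(model, 10_000_000, 2_000_000):.2f}")
```

For this workload the estimates come to $55 for GPT-5.4, $32 for Grok 4.20, and $100 for Claude Opus 4.6. Output-heavy workloads widen the gap because output tokens cost several times more than input tokens.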

Conclusion: Choosing the Right AI Model for 2026

The latest AI models from OpenAI, xAI, and Anthropic each excel in distinct areas: GPT-5.4 for versatile reasoning, Grok 4.20 for innovative speed, and Claude 4.6 for coding depth. For optimal results, consider hybrid workflows that route each task to the strongest model. As AI evolves, staying updated on benchmarks and user feedback is key. If you’re comparing AI models in 2026 or looking for the best fit for a specific task, test them yourself against your own workloads.

Expanding on AI Benchmark Methodologies: A Detailed Look at Key Evaluations

In the context of evaluating advanced AI models like OpenAI’s GPT-5 series, xAI’s Grok 4.20, and Anthropic’s Claude 4.6, benchmarks play a crucial role in providing objective, reproducible metrics. These tests assess capabilities such as coding, reasoning, general knowledge, and abstract problem-solving. Below, we delve into the methodologies of the primary benchmarks referenced earlier—SWE-Bench, GPQA, MMLU, HumanEval, and ARC-AGI-2. Each is designed with specific principles to ensure fairness, difficulty, and relevance to real-world applications. We’ll cover their construction, evaluation processes, metrics, strengths, and limitations, drawing from established sources.

SWE-Bench: Assessing Real-World Software Engineering

SWE-Bench is a benchmark focused on evaluating large language models’ (LLMs) ability to resolve real-world software issues from GitHub repositories. Introduced in 2023, it uses actual GitHub issues and pull requests (PRs) from 12 open-source Python repositories, totaling around 2,294 tasks in the full set, with subsets like SWE-Bench Verified (500 human-validated tasks) for more reliable scoring.

Methodology

- Task Construction: Each task provides a codebase snapshot (at a specific commit), an issue description, and associated failing unit tests (FAIL_TO_PASS tests). Models must generate a patch that, when applied, makes the tests pass without breaking existing functionality.

- Evaluation Process:

  - Clone the repository at the base commit.

  - Set up a Docker-based environment for isolation and reproducibility.

  - Apply the model’s generated patch.

  - Run the test suite to check if the issue is resolved.

- Metrics: Primary score is Pass@1 (percentage of tasks where the first generated patch succeeds), emphasizing functional correctness over textual similarity. Human baselines are around 70-80% for verified subsets.

- Strengths: Mirrors real software engineering by using authentic issues, reducing memorization risks, and supporting agentic workflows (e.g., tool use).

- Limitations: Focuses on Python and simple bug fixes (often solvable in hours), potentially underrepresenting complex, multi-language projects. Evaluation can be computationally intensive due to Docker setups.
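
The pass/fail decision at the end of that process can be sketched as follows. This is a simplified illustration of the scoring logic only, assuming test outcomes have already been collected. It does not clone repositories or run Docker, and the `TaskResult` class is a hypothetical container rather than part of the official harness. PASS_TO_PASS is the dataset's name for tests that passed before the fix and must keep passing.

```python
from dataclasses import dataclass


@dataclass
class TaskResult:
    """Test outcomes for one task after applying the model's patch (hypothetical container)."""
    fail_to_pass: dict[str, bool]  # tests that failed before the fix; True = passes now
    pass_to_pass: dict[str, bool]  # tests that passed before the fix; True = still passes


def is_resolved(result: TaskResult) -> bool:
    """Resolved only if every target test passes and nothing else regressed."""
    return (
        bool(result.fail_to_pass)
        and all(result.fail_to_pass.values())
        and all(result.pass_to_pass.values())
    )


def resolved_rate(results: list[TaskResult]) -> float:
    """Percentage of tasks resolved by the first patch attempt."""
    if not results:
        return 0.0
    return 100.0 * sum(is_resolved(r) for r in results) / len(results)


if __name__ == "__main__":
    results = [
        TaskResult({"test_bug": True}, {"test_old": True}),    # fixed, no regressions
        TaskResult({"test_bug": False}, {"test_old": True}),   # target test still fails
        TaskResult({"test_bug": True}, {"test_old": False}),   # fix broke an existing test
        TaskResult({"test_a": True, "test_b": True}, {}),      # fixed, nothing else to check
    ]
    print(f"{resolved_rate(results):.1f}% resolved")
```

The third case is why functional correctness matters more than textual similarity: a patch that fixes the reported issue but breaks an existing test scores zero.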

SWE-Bench has evolved with variants like SWE-Bench-C# for other languages, highlighting its adaptability.

GPQA: Testing Graduate-Level Scientific Reasoning

GPQA (Graduate-Level Google-Proof Q&A) is a 2023 benchmark comprising 448 multiple-choice questions in biology, physics, and chemistry, crafted by domain experts to assess deep reasoning rather than rote recall. It’s designed to be “Google-proof,” meaning questions can’t be easily answered via web searches.

Methodology

- Task Construction: Questions are written by PhD-level experts, revised based on feedback, and validated for objectivity. The dataset includes subsets like GPQA Diamond (198 hardest questions with high expert agreement). Each question has four options and requires multi-step reasoning.

- Evaluation Process:

  - Zero-shot or few-shot prompting: Models answer without or with worked examples, often using chain-of-thought (CoT) reasoning.

  - Human validation: Non-experts (PhDs in unrelated fields) establish difficulty, spending ~30-37 minutes per question with web access.

  - Human baselines: Experts achieve 65% accuracy (74% when clear mistakes identified in retrospect are discounted); non-experts reach ~34%.

- Metrics: Accuracy (percentage correct), with baselines like GPT-4 at 39%. Scores are averaged across domains.

- Strengths: Emphasizes scalable oversight—humans supervising AI on hard questions—and minimizes leakage by avoiding searchable facts.

- Limitations: Limited to STEM fields; multiple-choice format may not capture free-response nuances. Evaluations use specific prompts, which can vary results.
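
Because chain-of-thought responses are free text, scoring a multiple-choice benchmark requires extracting the final letter first. The sketch below assumes responses end with a line such as "Answer: (C)". That format is our assumption for illustration, not the official GPQA prompt, and this sensitivity to format is one reason prompt choice can shift results.

```python
import re
from typing import Optional

# Assumed response format: "... Answer: C" or "... Answer: (C)".
ANSWER_RE = re.compile(r"(?i:answer)\s*:\s*\(?([ABCD])\b\)?")


def extract_choice(response: str) -> Optional[str]:
    """Return the last answer letter found in the response, or None."""
    matches = ANSWER_RE.findall(response)
    return matches[-1] if matches else None


def accuracy(responses: list[str], gold: list[str]) -> float:
    """Fraction of questions answered correctly; unparseable responses count as wrong."""
    correct = sum(extract_choice(r) == g for r, g in zip(responses, gold))
    return correct / len(gold)


if __name__ == "__main__":
    responses = [
        "The reaction is exothermic, so equilibrium shifts left. Answer: (B)",
        "Considering spin statistics... Answer: A",
        "I am not sure about this one.",
    ]
    print(accuracy(responses, ["B", "C", "D"]))
```

With four options, random guessing scores about 25%, which is the floor to compare any result against.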

GPQA supports research on AI surpassing human expertise, with human baselines providing a clear target.

MMLU: Measuring Broad Knowledge and Multitask Understanding

MMLU (Massive Multitask Language Understanding), released in 2020, is a comprehensive benchmark with 15,908 multiple-choice questions across 57 subjects, from elementary math to professional law. It tests general knowledge and reasoning in zero/few-shot settings.

Methodology

- Task Construction: Questions are sourced from academic and professional exams, categorized into STEM, humanities, social sciences, etc. Includes a validation set (1,540 questions) for hyperparameter tuning and a small development set (5 questions per subject) that supplies the few-shot examples.

- Evaluation Process:

  - Zero-shot: Models answer without examples.

  - Few-shot: Models are given 5 examples per subject.

  - Scoring: Use joint adaptation (generate answer letters like “A”) or log-probability scoring.

- Metrics: Overall accuracy (correct answers/total questions), often averaged across subjects. Random guessing is 25%; human experts score ~90%.

- Strengths: Broad coverage promotes multitask generalization; transparent prompts enable reproducibility.

- Limitations: Some questions may be memorizable; focuses on knowledge recall over novel reasoning. Variants like HELM MMLU standardize evaluations.
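
A few-shot prompt of the kind described above can be assembled as follows. The header sentence follows the wording commonly used with the original MMLU evaluation code. The helper names and the toy arithmetic questions are illustrative assumptions, not items from the dataset.

```python
CHOICE_LETTERS = "ABCD"


def format_example(question, choices, answer=None):
    """Render one question; leave the answer blank for the item being tested."""
    lines = [question]
    lines += [f"{letter}. {choice}" for letter, choice in zip(CHOICE_LETTERS, choices)]
    lines.append("Answer:" + (f" {answer}" if answer else ""))
    return "\n".join(lines)


def build_prompt(subject, dev_examples, test_question, test_choices, k=5):
    """Header, up to k solved examples, then the unanswered test question."""
    header = f"The following are multiple choice questions (with answers) about {subject}."
    shots = [format_example(q, c, a) for q, c, a in dev_examples[:k]]
    return "\n\n".join([header, *shots, format_example(test_question, test_choices)])


if __name__ == "__main__":
    # Toy examples for illustration only; real runs use 5 dev-set items per subject.
    dev = [
        ("What is 2 + 2?", ["3", "4", "5", "6"], "B"),
        ("What is 3 * 3?", ["6", "9", "12", "8"], "B"),
    ]
    print(build_prompt("elementary mathematics", dev, "What is 10 - 7?", ["1", "2", "3", "4"]))
```

Under joint adaptation the model's next token after the final "Answer:" is compared with the gold letter. Log-probability scoring instead compares the likelihood the model assigns to each of the four letters.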

MMLU remains a staple for comparing LLMs’ foundational capabilities.

HumanEval: Evaluating Code Generation Functional Correctness

HumanEval, developed by OpenAI in 2021, is a benchmark for code generation with 164 hand-crafted Python problems. It prioritizes functional correctness over syntax matching.

Methodology

- Task Construction: Each problem includes a function signature, docstring, and ~7.7 unit tests. Models generate code from the docstring.

- Evaluation Process: Generate k samples per problem; execute against tests in isolation.

- Metrics: Pass@k (unbiased estimator of probability that at least one of k samples passes all tests):

\[
\text{pass@k} = \frac{1}{N} \sum_{i=1}^{N} \left[1 - \frac{\binom{n_i - c_i}{k}}{\binom{n_i}{k}}\right]
\]

where \(N\) is the number of problems, \(n_i\) is the number of samples generated for problem \(i\), and \(c_i\) is the number of those samples that pass all tests.

- Strengths: Execution-based, reducing bias from partial matches; entry-level but realistic.

- Limitations: Python-only; small size risks overfitting. Extensions like HumanEval-XL add multilingual support.
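
The pass@k estimator above is straightforward to compute directly. The following is a minimal sketch using exact integer binomials from the standard library; the sample counts in the usage example are invented for illustration.

```python
from math import comb


def pass_at_k(n: int, c: int, k: int) -> float:
    """Unbiased pass@k for one problem: n samples drawn, c of them correct."""
    if n - c < k:
        # Fewer than k incorrect samples: any k-subset must contain a correct one.
        return 1.0
    return 1.0 - comb(n - c, k) / comb(n, k)


def mean_pass_at_k(samples: list[tuple[int, int]], k: int) -> float:
    """Average pass@k over problems; each entry is (n_i, c_i)."""
    return sum(pass_at_k(n, c, k) for n, c in samples) / len(samples)


if __name__ == "__main__":
    # Hypothetical results: 10 samples per problem, with 3, 0, and 10 correct.
    results = [(10, 3), (10, 0), (10, 10)]
    print(f"pass@1 = {mean_pass_at_k(results, 1):.3f}")
    print(f"pass@5 = {mean_pass_at_k(results, 5):.3f}")
```

For k = 1 the estimator reduces to the fraction of correct samples, c/n, which is why pass@1 is often reported from a single sample per problem.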

It’s foundational for coding benchmarks, with variants incorporating visuals (HumanEval-V).

ARC-AGI-2: Probing Abstract Reasoning and Fluid Intelligence

ARC-AGI-2, launched in 2025, builds on the 2019 ARC-AGI benchmark to test AI’s skill acquisition efficiency on novel puzzles. It uses grid-based tasks requiring core knowledge priors like objectness and symmetry.

Methodology

- Task Construction: 1,000 training tasks teach priors; evaluation sets (public: 120, private: calibrated for difficulty) present 3-5 input-output pairs, then a test input for output prediction.

- Evaluation Process: Systems infer transformation rules; outputs are exact grid matches. Human testing establishes baselines (humans solve ~100% in 2 attempts).

- Metrics: Pass@2 (accuracy with up to 2 submissions); focuses on novel tasks to prevent memorization.

- Strengths: “Easy for humans, hard for AI” design highlights reasoning gaps; resistant to brute force.

- Limitations: Grid format may not generalize; low AI scores (e.g., 34% with evolution methods) indicate room for growth.
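
The exact-match, two-attempt scoring can be sketched as below. This simplified version assumes one test input per task and represents grids as lists of integer rows. The function names and toy grids are illustrative, and the official scoring code may differ in details such as handling tasks with several test inputs.

```python
Grid = list[list[int]]


def solved_within_two(attempts: list[Grid], expected: Grid) -> bool:
    """A task counts only if one of the first two attempts matches the grid exactly."""
    return any(attempt == expected for attempt in attempts[:2])


def pass_at_2(submissions: dict[str, list[Grid]], answers: dict[str, Grid]) -> float:
    """Fraction of tasks solved; tasks with no submission count as unsolved."""
    solved = sum(
        solved_within_two(submissions.get(task_id, []), expected)
        for task_id, expected in answers.items()
    )
    return solved / len(answers)


if __name__ == "__main__":
    answers = {
        "task_a": [[1, 0], [0, 1]],
        "task_b": [[2, 2], [2, 2]],
    }
    submissions = {
        "task_a": [[[0, 1], [1, 0]], [[1, 0], [0, 1]]],  # second attempt is correct
        "task_b": [[[2, 2], [2, 0]]],                    # one cell off: no partial credit
    }
    print(pass_at_2(submissions, answers))
```

The absence of partial credit is deliberate: a grid that is one cell off shows the transformation rule was not fully inferred.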

ARC-AGI-2 aims for AGI progress, with future versions adding interactivity.

These methodologies ensure benchmarks evolve with AI capabilities, providing insights beyond raw scores. For model selection, consider task-specific strengths and hybrid evaluations.
